About Me

Hi! I am a Research Engineer at Gan.ai, helping machines speak! Previously, I was at Microsoft Bing Travel, where I worked on making their visual content make people go WoW ❤️. I am currently interested in using deep learning to build awesome products.

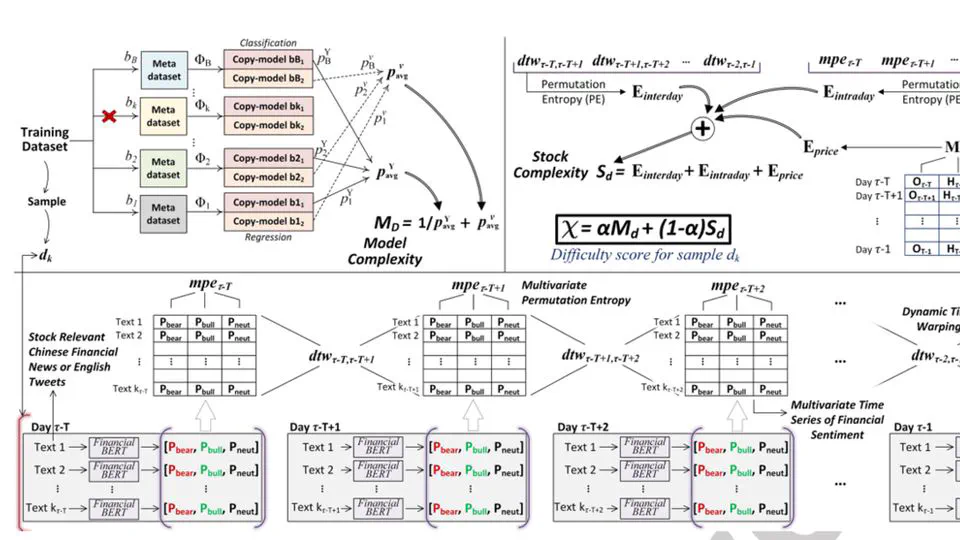

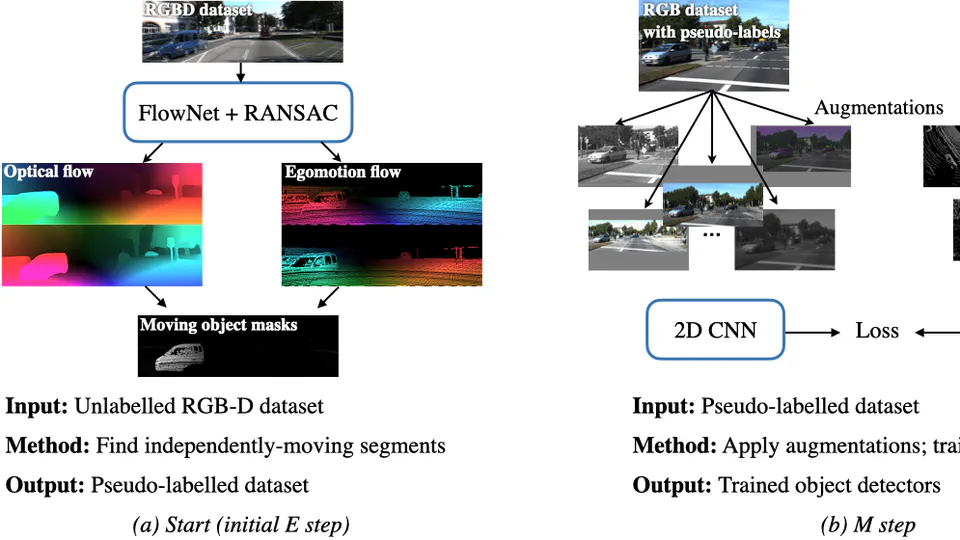

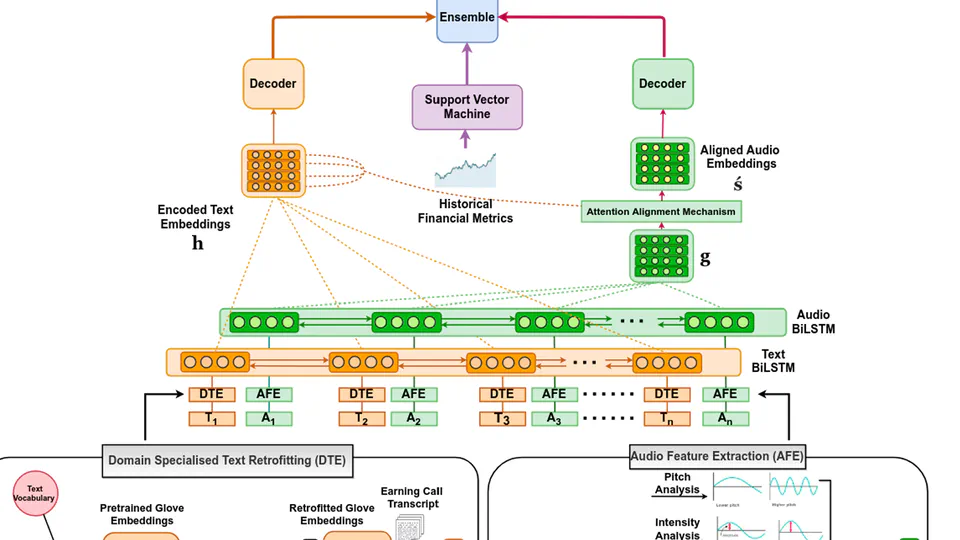

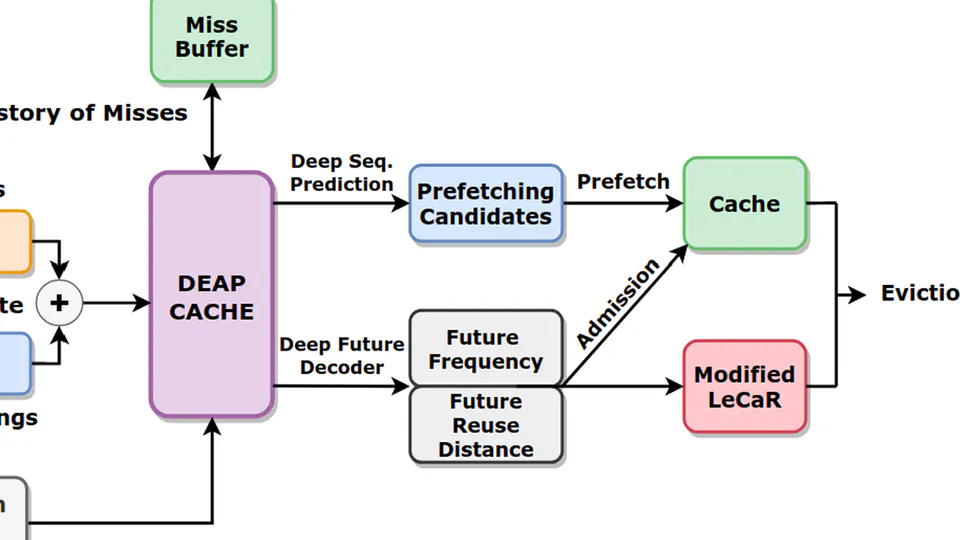

During my undergrad, I had the pleasure of working in various academic research labs and startups on topics ranging from computer vision and NLP to finance and reinforcement learning. I was also involved in leading various undergrad research groups focusing on Deep Learning, Core CS, Quantum Computing, and Blockchain.

Professional stuff aside, I am a die-hard anime fan, an avid reader, a wannabe musician/writer/YouTuber (lol, check out my YT channel and Medium!), and a lifelong learner who’s always up for a good conversation.

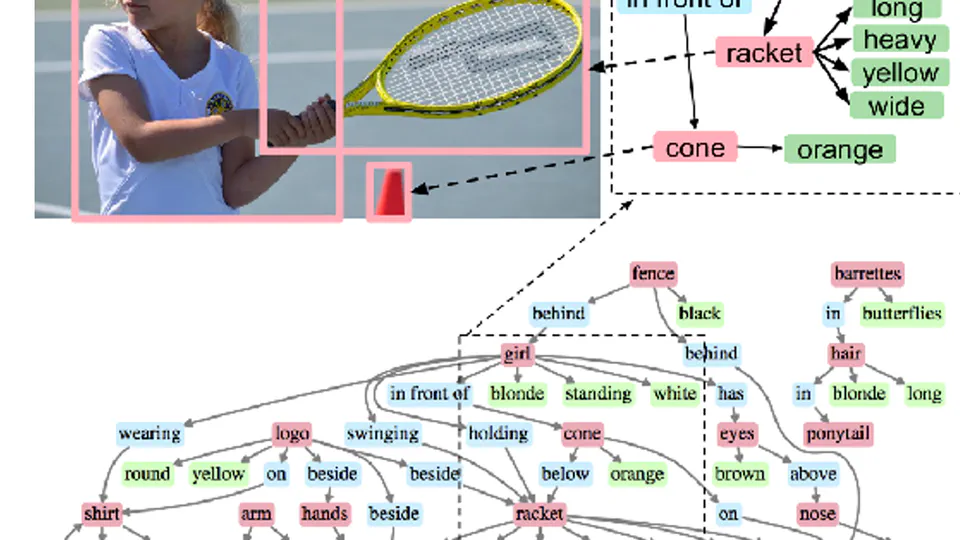

- Computer Vision

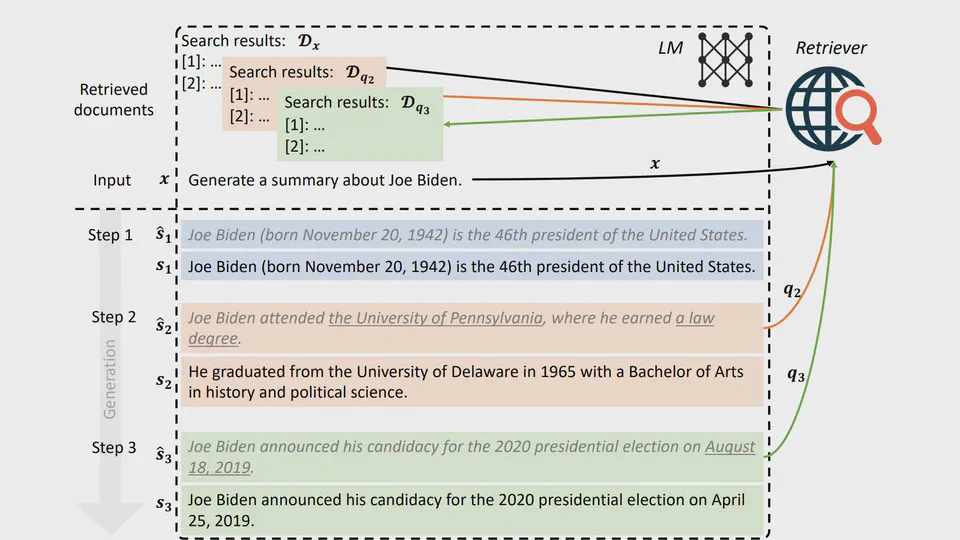

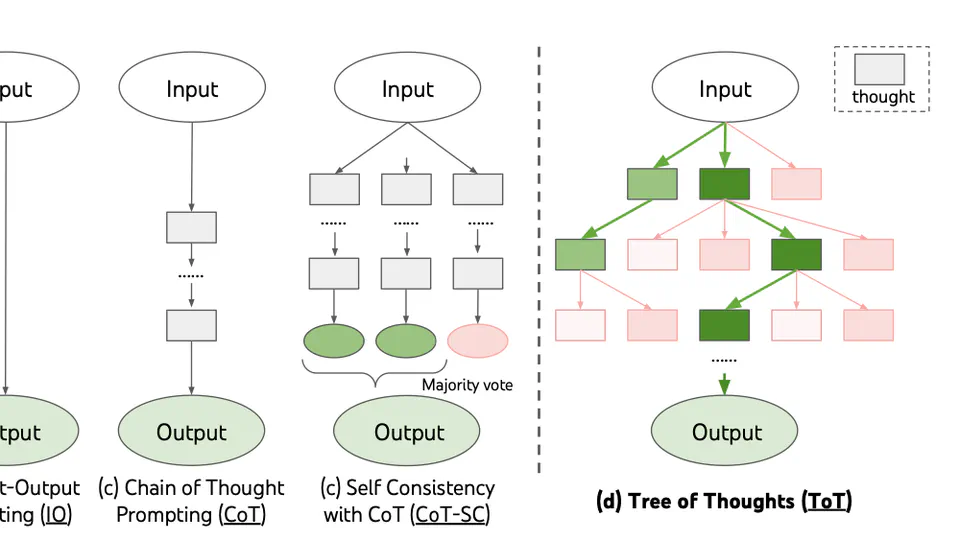

- NLP, MLOps

- Content Creation

- Anything and everything :P

B.Tech. in Computer Science

Indian Institute of Technology Roorkee